Big Model Controversy Series #5: Every Language Deserves Its Own Large Model

For silicon-based entities, all languages and modalities might map onto a common vector space, but for humans, unifying the world’s 7,000 languages involves complex issues of thought, culture, and ……

An intriguing viewpoint surfaces in a recent discussion: the concept of a large-scale Chinese language model is deemed a “pseudo-proposition,” suggesting that an overemphasis on language could lead these models astray. The argument hinges on two reasons:

1. **Cross-Language Mastery**: It’s argued that large models have already mastered cross-linguistic challenges, akin to building the mythical Tower of Babel. Language barriers are seen as a conquered frontier, with these models now delving into deeper realms like logical reasoning and complex task handling. Thus, prioritizing multilingual capabilities is considered redundant.

2. **Dominance of English in Quality Information**: Most high-quality research worldwide is published in English. Chinese data, which reportedly makes up over 15% of some datasets (source unspecified), varies in quality and is seen as potentially detrimental to the overall efficacy of these large models. The conclusion drawn is that focusing on Chinese data and test sets could misguide the development of these models.

Another perspective counters this view: while leveraging English data, or even synthetic data generated by models like GPT, is an efficient short-term strategy for competing with models like GPT-3.5 and GPT-4, a large-scale Chinese model remains necessary in the long term.

The core of this argument revolves around the intrinsic connection between language, thought patterns, culture, and values. Chinese characters, their radicals, and phonetics are deeply intertwined with Chinese thought and culture, serving as carriers of value systems.

It’s pointed out that in GPT-3’s training data, English accounts for a staggering 92.6% of words, while Chinese accounts for only about 0.1%. This disparity is seen as a precarious start not only for Chinese but for other languages as well. Further reinforcement of English could hasten the decline of other languages and cultures, threatening global linguistic and cultural diversity.

The necessity for diverse models fostering varied worldviews is likened to the ecological principle that a rich and diverse species distribution signifies a healthy and thriving ecosystem. Large language models could influence the rise or fall of languages, warranting the development of models for each linguistic group. Especially for Chinese, intensifying efforts in Chinese language models is crucial to maintain or even elevate its status.

From another angle, the claim that the Tower of Babel has been fully built is contested. For silicon-based entities, all languages and modalities might map onto a common vector space, but for humans, unifying the world’s 7,000 languages involves complex issues of thought, culture, and belief.

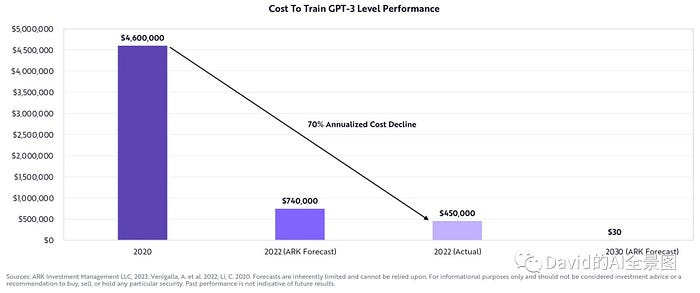

Finally, considering the rapidly decreasing costs of training large models and the plateauing of human language text data, it’s argued that developing individual models for each language is now feasible from a cost perspective.